Graph Neural Networks (GNNs) are a rapidly advancing field in machine learning, designed specifically to analyze graph-structured data that represents entities and their relationships. These networks are widely used in applications such as social network analysis, recommendation systems, and molecular data interpretation. A subset of GNNs, Attention-based Graph Neural Networks (AT-GNNs), employs attention mechanisms to improve predictive accuracy and interpretability by emphasizing the most relevant relationships in the data. However, their computational complexity poses significant challenges, particularly for using GPUs efficiently during training and inference.

One of the significant issues in AT-GNN training is the inefficiency caused by fragmented GPU operations. The computation involves several distinct steps, such as calculating attention scores, normalizing these scores, and aggregating feature data, and each step requires its own kernel launches and data movement. Existing frameworks also struggle to adapt to the heterogeneous nature of real-world graph structures, which leads to workload imbalance and reduced scalability. The problem is further exacerbated by super nodes (nodes with an unusually large number of neighbors), which strain memory resources and undermine performance.
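To make the three steps concrete, here is a minimal pure-Python sketch of an unfused attention layer over a tiny graph stored in CSR form. It is an illustration only, not code from any of the frameworks discussed: the graph, the feature values, and the function names (`edge_scores`, `edge_softmax`, `aggregate`) are all made up for this example, and a plain dot product is assumed as the scoring function. Each function stands in for a separate GPU kernel, and the edge-length lists passed between them stand in for intermediate results written to global memory.

```python
import math

# Tiny made-up graph in CSR form: node i receives messages from
# indices[indptr[i]:indptr[i + 1]].
indptr = [0, 2, 3, 6]
indices = [1, 2, 0, 0, 1, 2]
q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # query vector per node
k = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # key vector per node
v = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]  # value vector per node


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def edge_scores(indptr, indices, q, k):
    """Step 1: one raw attention score per edge (assumed dot-product scoring)."""
    scores = []
    for dst in range(len(indptr) - 1):
        for e in range(indptr[dst], indptr[dst + 1]):
            scores.append(dot(q[dst], k[indices[e]]))
    return scores


def edge_softmax(indptr, scores):
    """Step 2: normalize the scores over each node's incoming edges."""
    alpha = [0.0] * len(scores)
    for dst in range(len(indptr) - 1):
        lo, hi = indptr[dst], indptr[dst + 1]
        if lo == hi:
            continue  # isolated node, nothing to normalize
        peak = max(scores[lo:hi])  # subtract the max for numerical stability
        exps = [math.exp(s - peak) for s in scores[lo:hi]]
        total = sum(exps)
        for j, x in enumerate(exps):
            alpha[lo + j] = x / total
    return alpha


def aggregate(indptr, indices, alpha, v):
    """Step 3: attention-weighted sum of neighbor value vectors."""
    dim = len(v[0])
    out = []
    for dst in range(len(indptr) - 1):
        acc = [0.0] * dim
        for e in range(indptr[dst], indptr[dst + 1]):
            for d in range(dim):
                acc[d] += alpha[e] * v[indices[e]][d]
        out.append(acc)
    return out


# Three separate passes, each reading and writing a full edge-length list.
scores = edge_scores(indptr, indices, q, k)
alpha = edge_softmax(indptr, scores)
h = aggregate(indptr, indices, alpha, v)
```

In this sketch, `scores` and `alpha` each hold one value per edge and are fully written out before the next step starts. On a GPU, that pattern corresponds to the repeated kernel launches and data movement described above.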

Existing GNN frameworks, such as PyTorch Geometric (PyG) and the Deep Graph Library (DGL), attempt to optimize operations using kernel fusion and thread scheduling. Systems such as Seastar and dgNN have improved sparse operations and general GNN workloads. However, these methods rely on fixed parallel strategies that cannot dynamically adapt to the unique computational needs of AT-GNNs. For example, they suffer from mismatched thread utilization and fail to fully exploit the benefits of kernel fusion when faced with graph structures containing super nodes or irregular computational patterns.
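The effect of a fixed strategy on a graph with a super node can be shown with simple arithmetic. The sketch below is a toy model, not a measurement of any framework: the degree list is hypothetical, "worker" is a loose stand-in for a thread or warp, and the helper names are invented for this example. It compares assigning one worker per node with splitting the edges evenly across the same number of workers.

```python
def node_parallel_loads(degrees):
    """One worker per node: each worker processes all of that node's edges."""
    return list(degrees)


def edge_parallel_loads(degrees, num_workers):
    """Edges are split as evenly as possible, regardless of which node owns them."""
    base, extra = divmod(sum(degrees), num_workers)
    return [base + (1 if w < extra else 0) for w in range(num_workers)]


def imbalance(loads):
    """Busiest worker's load divided by the mean load (1.0 means perfectly balanced)."""
    return max(loads) / (sum(loads) / len(loads))


# Hypothetical graph: seven ordinary nodes and one super node.
degrees = [3] * 7 + [1003]

node_loads = node_parallel_loads(degrees)
edge_loads = edge_parallel_loads(degrees, num_workers=len(degrees))

print(round(imbalance(node_loads), 2))  # 7.84
print(imbalance(edge_loads))            # 1.0
```

Under these assumptions, the worker that owns the super node does almost eight times the average work while the others sit idle. Splitting by edges balances the load, but it is not free: partial results for the same node then live on different workers and must be combined, which is one reason a single fixed strategy rarely suits every graph.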

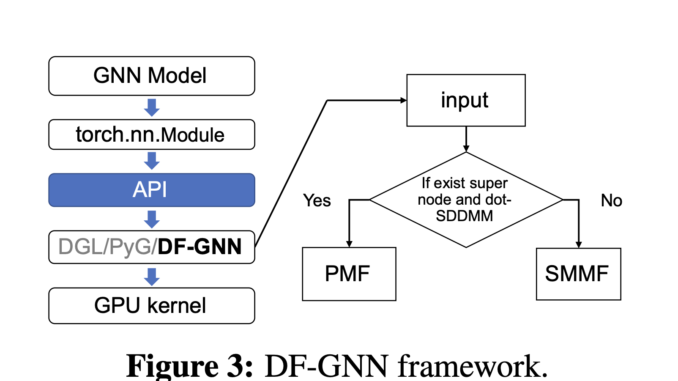

A research team from Shanghai Jiao Tong University and Amazon Web Services proposed DF-GNN, a dynamic fusion framework designed explicitly to optimize the execution of AT-GNNs on GPUs. Integrated with the PyTorch framework, DF-GNN introduces a bi-level thread scheduling mechanism that enables dynamic adjustments to thread distribution. Because each operation can use a different scheduling strategy, operations like Softmax normalization and sparse matrix multiplication can each run with a thread layout suited to them, which addresses the inefficiencies of static kernel fusion techniques and significantly improves performance.

DF-GNN employs two primary fusion strategies: Shared Memory Maximization Fusion (SMMF) and Parallelism Maximization Fusion (PMF). SMMF consolidates operations into a single kernel and stores intermediate results in shared memory, thereby reducing data movement. PMF, by contrast, targets graphs with super nodes, where edge-parallel strategies outperform node-parallel ones. The framework also introduces tailored optimizations such as warp-balanced scheduling for edge computations, redundancy-free Softmax to eliminate repeated calculations, and vectorized memory access to minimize global memory overhead. These features apply to both forward and backward computation, which is what makes end-to-end training acceleration possible.
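The idea behind single-kernel fusion can be sketched in a few lines of pure Python. This is a conceptual illustration under stated assumptions, not DF-GNN's kernel: `fused_attention` is a hypothetical name, the scoring function is assumed to be a dot product, and a small per-node Python list stands in for GPU shared memory. Scores, Softmax, and aggregation happen in one pass per destination node, no edge-length intermediate is written out, and each exponential is evaluated exactly once per edge.

```python
import math


def fused_attention(indptr, indices, q, k, v):
    """Per-node fused pass: scores, softmax, and aggregation in one loop.

    Returns the output features and the number of exp() evaluations.
    """
    dim = len(v[0])
    out, exp_calls = [], 0
    for dst in range(len(indptr) - 1):
        nbrs = indices[indptr[dst]:indptr[dst + 1]]
        acc = [0.0] * dim
        if not nbrs:
            out.append(acc)  # isolated node keeps a zero vector
            continue
        # Scratch buffer holding only this node's scores
        # (a stand-in for shared memory in this sketch).
        buf = [sum(a * b for a, b in zip(q[dst], k[src])) for src in nbrs]
        peak = max(buf)
        total = 0.0
        for j, src in enumerate(nbrs):
            w = math.exp(buf[j] - peak)  # evaluated once per edge, then reused
            exp_calls += 1
            total += w
            for d in range(dim):
                acc[d] += w * v[src][d]
        # Normalize once at the end instead of storing per-edge weights.
        out.append([x / total for x in acc])
    return out, exp_calls


# Same made-up graph layout as a CSR adjacency: node i receives from
# indices[indptr[i]:indptr[i + 1]].
indptr = [0, 2, 3, 6]
indices = [1, 2, 0, 0, 1, 2]
q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
k = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
v = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]

h, exp_calls = fused_attention(indptr, indices, q, k, v)
```

The trade-off is visible even in this toy version: the scratch buffer must hold all of a node's scores at once, so a super node with a very large neighborhood can exceed what a fast on-chip buffer can hold. That limitation is consistent with the article's point that a second, parallelism-oriented strategy is needed for such graphs.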

Extensive evaluations demonstrate substantial performance gains for DF-GNN. On full graph datasets like Cora and Citeseer, DF-GNN achieved an average speedup of 16.3x compared to the DGL sparse library, with peak improvements of up to 7x on kernel operations. On batch graph datasets, including high-degree graphs like PATTERN, it provided an average speedup of 3.7x, surpassing competitors like cuGraph and dgNN, which achieved only 2.4x and 1.7x, respectively. Furthermore, DF-GNN adapted well to datasets with many super nodes, such as Reddit and Protein, achieving an average 2.8x speedup while maintaining robust memory utilization. The framework's bandwidth utilization remained consistently high across graph sizes and structures.

Beyond kernel-level improvements, DF-GNN also accelerates end-to-end training workflows. On batch graph datasets, it achieved an average speedup of 1.84x for complete training epochs, with individual forward pass improvements reaching 3.2x. On full graph datasets, the speedup extended to 2.6x, highlighting DF-GNN’s efficiency in handling diverse workloads. These results underline the framework’s ability to adapt dynamically to different computational scenarios, making it a versatile tool for large-scale GNN applications.

In tackling the inherent inefficiencies of AT-GNN training on GPUs, DF-GNN introduces a well-rounded solution that dynamically adapts to varying computation and graph characteristics. By addressing critical bottlenecks such as memory utilization and thread scheduling, this framework sets a new benchmark in GNN optimization. Its integration with PyTorch and support for diverse datasets ensure broad applicability, paving the way for faster, more efficient graph-based learning systems.

Check out the Paper. All credit for this research goes to the researchers of this project.

Nikhil is an intern consultant at Marktechpost. He is pursuing an integrated dual degree in Materials at the Indian Institute of Technology, Kharagpur. Nikhil is an AI/ML enthusiast who is always researching applications in fields like biomaterials and biomedical science. With a strong background in Material Science, he is exploring new advancements and creating opportunities to contribute.
